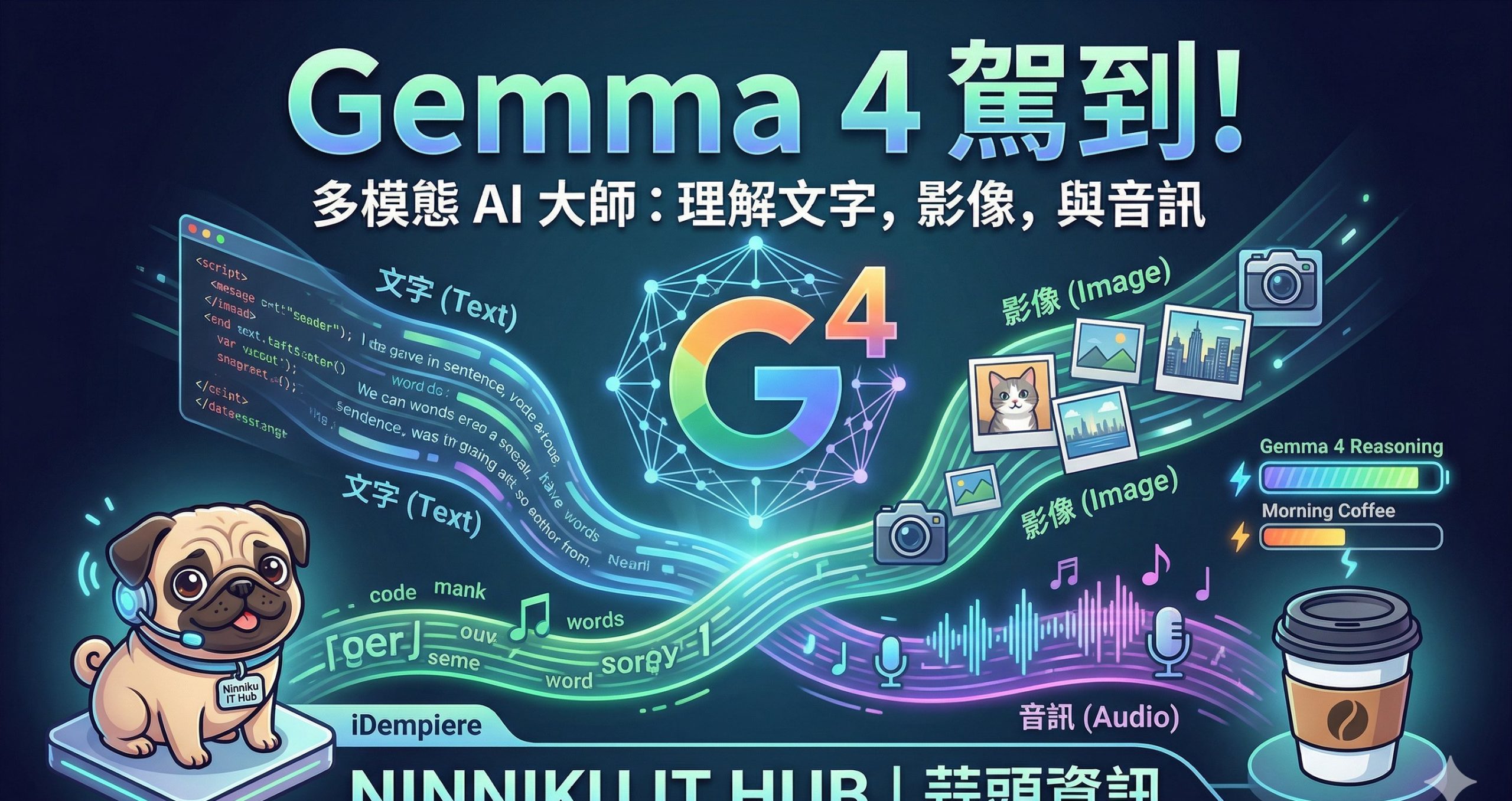

Gemma 4 is Here!

Gemma 4 has arrived! It isn’t just another AI model; it’s a multimodal maestro that understands text, images, and audio. Its reasoning is so strong it might make you question your existence, and it’s definitely smarter than your morning coffee.

What is Multimodality?

Imagine if you could read books but never see the vibrant colors of a sunset or hear the melody of a song. Wouldn’t that be lonely? Traditional AI models are mostly ‘unimodal’. Like scholars locked in a dark room, they can only process text. Human senses, by contrast, work together: we read signs by the road, hear the bustle of the street, and can even recognize food by its aroma. That is the appeal of multimodality.

Gemma 4’s multimodality means it is no longer just a text-processing machine. It can ‘see’ fine details in images (like whether your cat is sneaking extra treats). It can ‘hear’ the emotion and tone in audio. It then seamlessly combines these sensory inputs with its language reasoning. Because it understands across modalities directly, it no longer needs the clumsy workaround of first turning images into text descriptions, achieving true ‘sensory fusion.’
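One widely used way to get this kind of fusion is to project image features into the same embedding space as text tokens. Those projected vectors are then spliced into a single input sequence, so the language model attends over pixels and words together. The sketch below illustrates that general technique under toy assumptions. It is not a description of Gemma 4’s actual internals. The `<image>` placeholder, the projection matrix and all vector sizes are hypothetical values chosen for illustration.

```python
from typing import Dict, List

Vector = List[float]


def project(features: Vector, weights: List[Vector]) -> Vector:
    """Linear projection: each row of `weights` produces one output dimension,
    mapping a vision feature vector into the text embedding space."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def fuse_sequence(
    tokens: List[str],
    text_embeddings: Dict[str, Vector],
    image_patches: List[Vector],
    projection: List[Vector],
) -> List[Vector]:
    """Build one input sequence in which the (hypothetical) "<image>" placeholder
    is replaced by projected image-patch embeddings."""
    sequence: List[Vector] = []
    for token in tokens:
        if token == "<image>":
            # Each image patch becomes one "soft token" living in the text space.
            sequence.extend(project(p, projection) for p in image_patches)
        else:
            sequence.append(text_embeddings[token])
    return sequence


# Toy setup: 2-dim text embeddings, 3-dim vision features, two image patches.
text_emb = {
    "Is": [0.1, 0.2], "my": [0.3, 0.1], "cat": [0.5, 0.5],
    "eating": [0.2, 0.9], "?": [0.0, 0.1],
}
proj = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]  # 2 x 3 projection matrix
patches = [[0.4, 0.1, 0.2], [0.9, 0.3, 0.3]]

seq = fuse_sequence(["<image>", "Is", "my", "cat", "eating", "?"], text_emb, patches, proj)
print(len(seq))  # 7: two image soft tokens followed by five text tokens
```

After the splice, every element of `seq` has the same width. Downstream layers can therefore treat image patches and words uniformly, with no intermediate text caption.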

The Gemma 4 Family: From Giants to Edge Dwellers

Gemma 4 isn’t a single model; it’s a meticulously designed family, with each member having its own specialized ‘battlefield.’ Depending on your needs, you can choose the perfect partner:

- Dense Models: The ‘heavyweight scholars’ of the family. With massive parameter counts, they excel at high-difficulty logical reasoning, complex coding, and deep knowledge retrieval. If you are tackling advanced research papers or large-scale software engineering, this is your go-to.

- MoE (Mixture of Experts) Models: Think of these as a team of specialized experts. A ‘router’ mechanism activates only the most relevant experts for each input token, so only a fraction of the model’s parameters do work at any step. The result is very high quality at a much lower compute cost per token than a dense model of comparable total size. Perfect for automated workflows that need both brilliance and speed.

- Edge Models (e.g., E2B): The ‘mobile special forces.’ They are heavily optimized to run smoothly on your laptop or even your smartphone. Because they don’t rely on massive cloud servers, they offer strong privacy and low latency. That makes them ideal for IoT devices and real-time mobile applications.
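The ‘router’ in the MoE entry can be pictured as a top-k gating layer with four steps. It scores every expert for the current token, runs only the best k, and blends their outputs with softmax weights. Below is a minimal, generic sketch of top-k MoE routing. It is not Gemma 4’s actual router. The four toy experts, `top_k=2` and the router weights are illustrative assumptions.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(
    x: Vector,
    router_weights: List[Vector],
    experts: List[Callable[[Vector], Vector]],
    top_k: int = 2,
) -> Tuple[Vector, List[int]]:
    """Route one token vector to its top-k experts and mix their outputs.

    Returns the combined output and the indices of the experts that ran.
    """
    # 1. Router: one score per expert (dot product with that expert's router row).
    logits = [sum(w * v for w, v in zip(row, x)) for row in router_weights]
    # 2. Keep only the top-k experts; the rest are never executed.
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    # 3. Renormalize the chosen experts' scores into mixing weights.
    gates = softmax([logits[i] for i in chosen])
    # 4. Weighted sum of the selected experts' outputs.
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = experts[i](x)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


# Four toy "experts", each just scaling its input by a different factor.
experts = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
router = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]

out, chosen = moe_layer([0.5, 2.0], router, experts, top_k=2)
print(chosen)  # [2, 1]
print(f"{len(chosen)} of {len(experts)} experts ran")  # 2 of 4 experts ran
```

The unchosen experts never execute. That is why an MoE model’s compute per token scales with `top_k` rather than with its total parameter count.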

Application Scenarios: From the Office to Your Pocket

With Gemma 4, incredible scenarios become reality:

- Autonomous Agents: By leveraging multimodal understanding, you can build AI assistants that ‘watch’ your computer screen and execute tasks based on visual and textual instructions.

- AI-Powered Development: It can comprehend code architecture and even ‘see’ design mockups (UI designs), helping turn a Figma design into a first draft of HTML/CSS code.

- Intelligent Monitoring & Security: Using edge models, smart cameras can analyze video and audio in real-time to detect anomalies and react instantly to security threats.
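The screen-watching agent in the first bullet boils down to an observe, decide, act loop. The sketch below assumes three hypothetical hooks: `capture_screen`, `decide` and `perform`. In practice you would wire these to a screenshot tool, a multimodal model call and an input-automation library. None of them is a Gemma 4 or Google API, and the `Action` vocabulary is invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    kind: str         # hypothetical action set: "click", "type", or "done"
    target: str = ""  # UI element or text the action applies to


def run_agent(
    goal: str,
    capture_screen: Callable[[], bytes],
    decide: Callable[[str, bytes, List[Action]], Action],
    perform: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Observe-decide-act loop: screenshot -> model picks next action -> execute.

    `decide` stands in for a multimodal model that sees the goal text, the
    current screenshot, and the actions taken so far.
    """
    history: List[Action] = []
    for _ in range(max_steps):  # hard cap so a confused model cannot loop forever
        screenshot = capture_screen()
        action = decide(goal, screenshot, history)
        history.append(action)
        if action.kind == "done":
            break
        perform(action)
    return history


# Scripted stand-ins so the loop runs without a real model or screen.
script = iter([Action("click", "Search box"), Action("type", "Gemma 4"), Action("done")])
performed: List[Action] = []

history = run_agent(
    goal="Search for Gemma 4",
    capture_screen=lambda: b"fake-png-bytes",
    decide=lambda goal, shot, hist: next(script),
    perform=performed.append,
)
print([a.kind for a in history])  # ['click', 'type', 'done']
```

The `max_steps` cap is a deliberate design choice. An agent that acts on its own output should always have a hard stop in case the model never decides it is finished.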

Ready to embrace the new era of AI? Gemma 4 is ready—are you?
